Planning your testing effort for the next project: How much time do you have? How many people do you have? _How_ big was that scope again?

Testing is virtually always constrained by too little time, too few people, too little infrastructure, and so on. It is therefore vital that the testing effort can separate what is critical from what is not, so that scarce resources are applied where they are most valuable.

New functionality is where the excitement is, and definitely where there is a high probability of defects. And in the face of tight budgets and schedules, even new functionality testing is often short-changed.

But is it really the only place of high risk? How many times has the code been changed and something seemed to break somewhere else? What if the impact is a data integrity issue? What if the collateral damage is deep into a different business process? What if performance of key tasks degrades after making a “small” change in a supposedly unrelated area of the system?

Regression testing is intended to uncover such errors. It re-tests areas of the system that were previously considered to be working after changes have been made (e.g., defect fixes or new functionality).

Challenges

Regression testing is typically a challenge on any project because of ever-present time and resource constraints. However, neglecting regression testing builds a potential for undetected failure into the system, and this potential typically only increases as time passes.

The following are some more specific reasons why regression testing is often left behind in the project plan.

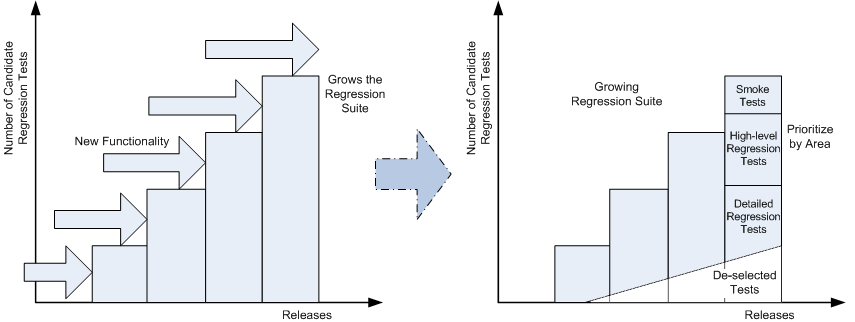

Size of system grows while projects remain similarly constrained:

- The potential size of the regression suite is increasing over time as the system grows in terms of functionality and complexity

- As each new release typically faces the same constraints in terms of budget, schedule, and team size, a smaller and smaller percentage of prior functionality gets re-tested because of the focus on new functionality and the growing size of the regression suite
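
The arithmetic behind the two points above can be sketched in a few lines. The numbers are purely illustrative assumptions (a suite that starts at 200 tests, grows by 100 per release, and a fixed capacity to re-run 200 tests per release), not measurements from any project.

```python
def retest_fraction(release: int, initial_tests: int,
                    new_tests_per_release: int, budget: int) -> float:
    """Fraction of the accumulated suite that a fixed budget can re-run."""
    # Hypothetical linear growth: the suite gains the same number of tests each release.
    suite_size = initial_tests + new_tests_per_release * (release - 1)
    return min(1.0, budget / suite_size)


# Illustrative values only: the budget stays flat while the suite keeps growing.
for release in (1, 3, 5, 10):
    share = retest_fraction(release, initial_tests=200,
                            new_tests_per_release=100, budget=200)
    print(f"release {release}: {share:.0%} of prior tests can be re-run")
```

Under these assumptions the re-testable share falls from all of the suite at the first release to half of it by the third, and keeps shrinking from there.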

Complexity of system grows while testing practices remain static:

- The inherent complexity of the system is increasing as more and more code is written for new features and the interactions of the system increase

- The system-level dependencies and interactions become less and less understood on average by each individual team member

- Knowledge of the system as a whole and how to test it typically remains uncaptured because investment in appropriately formalized tests is haphazard or unsustained, resulting in insufficient test coverage or an inability to even gauge the actual degree of coverage

Automation efforts are prone to failure:

- Investments in test automation for regression testing are prone to failure over time because of perceived maintenance overhead, the lack of an ROI-driven approach, the lack of appropriately skilled people, a poor understanding of what exactly is being tested, etc.

- Rapid development cycles do not traditionally allow the time to create well-designed test automation suites (e.g., the effort is perceived as slowing the release of the current new functionality, or as an expense to the project that brings it little direct benefit)

Solution

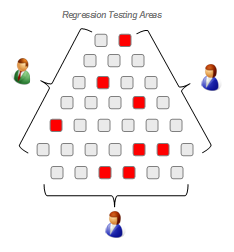

One of the crucial components to being “smart” in a constrained situation is to be able to correctly decide on or select which things are “must-haves”, which are “should-haves” and which are “nice-to-haves”.

For any testing effort on a maturing system, a regression testing approach is definitely a “must-have” in order to address that risk of collateral damage or service degradation. At the same time, you should not expect to have to rerun all the tests you did when the functionality was new. Thoughtfully sample the system functionality and associated tests for areas where a regression would matter most. If and when such issues are found, deeper testing can take place at that point.

In order to define what a reasonable sample of the tests should be:

- Identify traditionally risky areas in terms of criticality, sensitivity, complexity, interdependencies, and instability

- Determine the “breadth and depth of testing” required based on both traditionally risky areas and where the changes for this next release are planned

- Capture the strategic regression tests from the business/acceptance, technical/functional, and “-ilities”/non-functional testing points of view in a formalized manner
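
One way to make the sampling steps above concrete is to score each candidate test against the risk factors and the planned changes, then fill a fixed time budget from the top. The sketch below is only an illustration: the weights, the 0 to 3 rating scale, the change bonus, and the sample tests are all hypothetical and would need to be agreed with the business and technical stakeholders.

```python
from dataclasses import dataclass

# Hypothetical weights for the risk factors listed above; tune per project.
RISK_WEIGHTS = {
    "criticality": 3,
    "sensitivity": 2,
    "complexity": 2,
    "interdependencies": 2,
    "instability": 1,
}
CHANGE_BONUS = 5  # assumed extra weight for areas changed in the next release


@dataclass
class RegressionTest:
    name: str
    area: str
    minutes: int   # estimated execution time
    ratings: dict  # factor name -> rating from 0 (low) to 3 (high)


def risk_score(test: RegressionTest, changed_areas: set) -> int:
    """Weighted sum of the risk ratings, plus a bonus if the area is changing."""
    score = sum(weight * test.ratings.get(factor, 0)
                for factor, weight in RISK_WEIGHTS.items())
    if test.area in changed_areas:
        score += CHANGE_BONUS
    return score


def select_regression_sample(tests, changed_areas, budget_minutes):
    """Greedily pick the highest-scoring tests that still fit the time budget."""
    ranked = sorted(tests, key=lambda t: risk_score(t, changed_areas), reverse=True)
    selected, used = [], 0
    for test in ranked:
        if used + test.minutes <= budget_minutes:
            selected.append(test)
            used += test.minutes
    return selected


# Made-up candidates for illustration.
tests = [
    RegressionTest("post_invoice", "billing", 30,
                   {"criticality": 3, "sensitivity": 3, "interdependencies": 2}),
    RegressionTest("edit_profile", "accounts", 20,
                   {"criticality": 1, "complexity": 1}),
    RegressionTest("export_report", "reporting", 40,
                   {"complexity": 2, "instability": 2}),
]
sample = select_regression_sample(tests, changed_areas={"reporting"}, budget_minutes=75)
print([t.name for t in sample])  # ['post_invoice', 'export_report']
```

A greedy pick is used here only because it is easy to explain in a planning meeting. The tests that do not make the cut are set aside, not deleted, and can be pulled back in when a regression is found in their area.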

If you already have comprehensive, well-defined, documented tests, then deliberately de-select less strategic tests that you used when testing new functionality. Your pared-down regression test suite will help you mitigate the risk of missing things while you continue to put focus on the new development in your testing effort.

It may feel a bit painful at first to “throw away” some of those tests, but in truth you are just setting them aside and focusing on the high-priority tests that make the most business sense to re-execute on a regular basis.

Strategically Keeping Up

Even with thoughtful consideration of the tests for the regression suite, the number of tests and the time it can take to execute them can be significant. To help reduce the time for execution as well as reduce maintenance of test data and set-up/configuration costs, we can turn to test automation.

“Many people try to add automation to their projects, only to end up frustrated and annoyed. After one or two disastrous attempts, many just give up and stop trying. However, implementing automated testing is a basic cost-benefit analysis.”

“Automation is a method of testing, not a type of testing, and so should be applied to facilitate or accelerate those tests in the overall plan where there is a clear benefit for the project or the maintenance of the final system.”

– Pot-holes on the Road to Automation

The regression test suite should be automated where the business case for doing so makes sense. To help determine the actual ROI of this effort, be sure to take a system lifecycle view of the investment (not just a current project view):

- Automate where the business case is strong (e.g., tests that are repetitive, difficult to do manually, data-volume or set-up intensive, or that cover a large number of permutations)

- Selectively creating automated scripts for high-use and high-risk functionality also helps maximize ROI

- Use the previous build to automate tests to allow development of the scripts on a “stable” system and minimize rework

- Manually test the new functionality on a current build to actively remove defects and immediately add value while simultaneously identifying candidate automation scripts, data requirements, and dependencies for the next automation cycle
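
The difference between a project view and a lifecycle view of the investment can be shown with a simple break-even calculation. This is a minimal sketch under assumed figures (hours to build a script, hours of upkeep per run, hours to run the same test manually); none of the numbers come from a real project, and a fuller business case would include further costs such as tooling and training.

```python
import math


def break_even_runs(build_cost, maintenance_per_run, manual_cost_per_run):
    """Smallest number of runs at which automating costs no more than manual runs.

    Returns None when each automated run costs at least as much as a manual
    one, in which case the investment never pays back.
    """
    saving_per_run = manual_cost_per_run - maintenance_per_run
    if saving_per_run <= 0:
        return None
    return math.ceil(build_cost / saving_per_run)


def net_saving(build_cost, maintenance_per_run, manual_cost_per_run, expected_runs):
    """Effort saved (positive) or lost (negative) over the expected number of runs."""
    return expected_runs * (manual_cost_per_run - maintenance_per_run) - build_cost


# Hypothetical figures, all in person-hours.
build, upkeep, manual = 40, 0.5, 4

print(break_even_runs(build, upkeep, manual))              # 12
print(net_saving(build, upkeep, manual, expected_runs=6))  # -19.0 (one project)
print(net_saving(build, upkeep, manual, expected_runs=50)) # 135.0 (system lifecycle)
```

With these assumed figures the script loses effort if judged on a single project of six runs, but pays back many times over across the life of the system, which is why the horizon chosen for the ROI calculation matters.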

Summary

Is the risk tolerance of your project and organization so high that you can really afford not to have an efficient regression testing solution?

Reduce the level of risk in your system and organization by including a regression testing solution as part of your overall test strategy for any significant release. Design your regression test suite in such a way that you are able to perform different levels of regression testing while also reducing resource and turnaround requirements.

If you carefully feed and care for your regression test suite, you will reap the benefit of continually increasing confidence in the stability and quality of your system through maximized test coverage and ease of execution within your constraints.