Recently my approach to testing has been heavily influenced by session-based test management. My test plan has been a high-level list of test tasks, and the testing has been exploratory, performed in sessions on a given topic, e.g. a function. I have two problems with this:

- As much as I like lists, they make bad test plans, at least for me. There are always too many tasks, so the list grows to several pages in a document, making it hard to get an overview. It is also difficult to depict relationships between tasks. I have tried different groupings and headers, and managed to create nightmare layouts that are impossible to read. A list is also highly binary: either the task is done or it is not, and there is no equivalent to “work in progress”.

- I would rarely be able to finish a session without interruption. Something urgent would come up and I would have to abort the session, and by the time I restarted, the conditions might have changed. As I discussed in the post on October 17th, I also tended to feel obliged to complete the session before I took on a new task, even though more important matters might have surfaced after the session started. In this situation it was of course hard to keep track of the status of the tasks.

The appeal of thread-based test management is of course that I can perform test tasks in parallel – it is not necessary to say that a test task is done. Instead I can scrape the surface of everything once and sort of work my way down to the insignificant details from there.

I have resolved to use a different approach for the next test period. This is what I have done so far:

- I have installed the open source mind map tool FreeMind

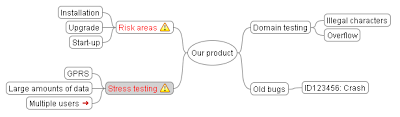

- I have created a mind map (I call it fabric) with:

  - Error reports

  - Product heuristics

  - Generic heuristics

- Since the fabric only contains short thread names, I have introduced knots: (k)notes in simple text format that I link, or tie, onto the threads. The notes contain additional information such as hints, tips and reminders.

- I have compiled the stitch guide. The stitch guide provides guidelines on how I think that my project should use thread-based test management. The guidelines are not rules, but suggestions intended to promote consistency.

- I have a template for daily status reports. The report can contain anything I feel needs writing down during the day, but should at least contain the names of the threads that have been tested in some way. I am currently looking for a more fun name than “Daily status report”.

- The actual testing will of course be exploratory.

A fabric. Colours and icons are used to show priorities and status. The red arrow indicates that there is a knot tied onto the thread.

My plan is to use the first version of the fabric as my test plan. During the test period I will keep updating the fabric, and at the end of the test period the current status of the fabric will be my test report.
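To make the idea of a fabric that doubles as plan and report a bit more concrete, here is a minimal sketch of the same structure outside FreeMind. It is only an illustration under my own assumptions: the names `Thread`, `Status`, `render_report` and `threads_touched`, the three status values and the priority scale are all hypothetical, and none of this is something FreeMind provides.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    # Hypothetical status values; deliberately not just "done / not done".
    NOT_STARTED = "not started"
    IN_PROGRESS = "in progress"
    PARKED = "parked"


@dataclass
class Thread:
    name: str
    status: Status = Status.NOT_STARTED
    priority: int = 2  # assumed scale: 1 = high, 3 = low
    knots: list = field(default_factory=list)     # free-text (k)notes tied onto the thread
    children: list = field(default_factory=list)  # sub-threads

    def walk(self, depth=0):
        """Yield (depth, thread) for this thread and everything below it."""
        yield depth, self
        for child in self.children:
            yield from child.walk(depth + 1)


def render_report(fabric):
    """Render the current state of the fabric as an indented text outline."""
    lines = []
    for depth, thread in fabric.walk():
        marker = " (knot)" if thread.knots else ""
        lines.append(f"{'  ' * depth}- {thread.name} [{thread.status.value}]{marker}")
    return "\n".join(lines)


def threads_touched(fabric):
    """Names of threads currently in progress, e.g. for a daily status report."""
    return [t.name for _, t in fabric.walk() if t.status is Status.IN_PROGRESS]


fabric = Thread("Fabric", children=[
    Thread("Error reports", status=Status.IN_PROGRESS,
           knots=["Retest after the next build"]),
    Thread("Product heuristics"),
    Thread("Generic heuristics"),
])

report = render_report(fabric)
touched = threads_touched(fabric)
print(report)
```

The same object serves as the plan at the start of the test period and as the report at the end; only the statuses and knots change in between.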

Note to the reader: This is my current interpretation of thread-based test management, and this constitutes my starting point. Hopefully it will evolve into something that I find useful, and turn out to be an improvement compared to today. If not, I will not hesitate to chuck it and try something new. I have big hopes though and cannot wait to get started!